By Harish Gautam · March 2026

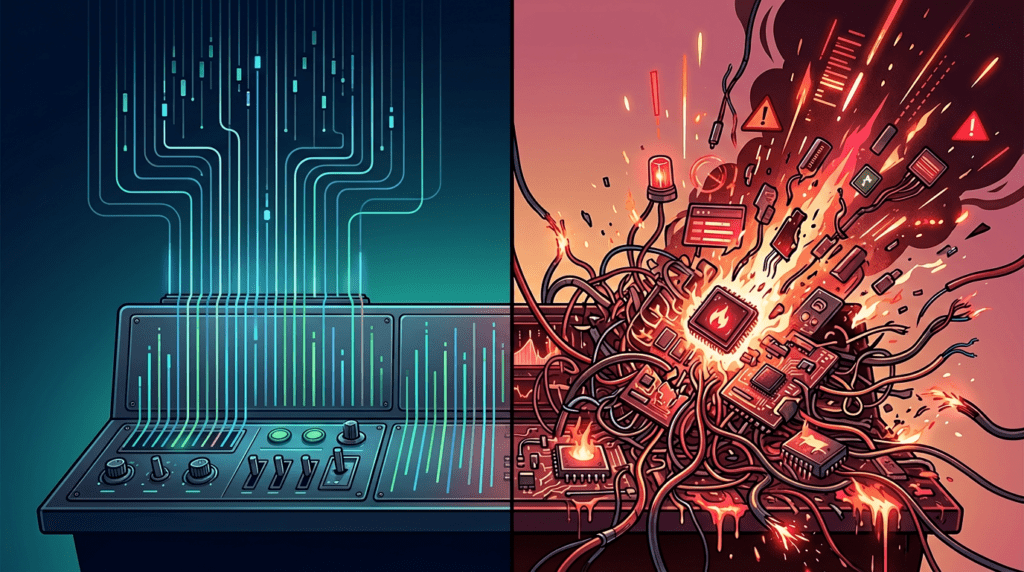

In early 2026, two of the world's most closely watched technology companies made headlines for completely opposite reasons. One grew to a $380 billion valuation with a single person running its entire growth marketing function. The other brought down its own systems twice, because the AI code it shipped was never properly reviewed. The contrast is not a coincidence. It is a masterclass in how AI delivers leverage, and how it creates liability.

The Story Nobody Expected: One Marketer, One Tool, a $380 Billion Company

Austin Lau joined Anthropic as its first growth hire with no technical background whatsoever. When Claude Code (Anthropic's agentic coding tool) launched internally, Lau famously had to Google "how to open Terminal on Mac." That is not a humble-brag anecdote; it is the entire point of the story.

By the time Anthropic closed its $30 billion Series G funding round in February 2026, Lau had built and operated the technical infrastructure behind its growth marketing, largely by himself. He had used Claude Code to construct Figma plugins that reduced ad-creative production from 30 minutes to 30 seconds. He had engineered closed-loop feedback systems that continuously analysed low-converting ads, proposed and deployed tweaks, and then measured the results automatically.

"He wasn't asking AI to write ad copy. He was building systems that managed the entire outcome cycle, from analysis to deployment to measurement."

(Reconstructed from verified reporting on Austin Lau's role at Anthropic.)

This is the distinction that most organisations entirely miss, and it is the fault line that separates truly transformative AI adoption from expensive novelty.

Use Cases vs Outcomes: The Fundamental Misunderstanding of AI

The dominant conversation around AI in marketing right now is about use cases: "Use AI to write an email." "Use AI to generate social captions." "Use AI to summarise a report." These are not bad ideas, but they are low-leverage ideas. They treat AI as a faster pen.

What Lau did at Anthropic is categorically different. He identified the outcome he needed: consistently improving conversion rates across paid advertising. He then built agentic workflows that drove that outcome continuously, not just when he had spare time to tinker.

The Use-Case Mindset

"I'll use AI to write five ad headline variations."

The Outcome Mindset

"I'll build a system that identifies my worst-performing ads, generates improved variants, publishes them, and tracks the uplift, on a loop."

The use-case mindset saves you 20 minutes on a Tuesday. The outcome mindset raises the performance floor of every campaign you ever run. Lau's approach meant he was always "raising the floor", improving his worst-performing assets in near-real time rather than occasionally polishing the already-good ones.
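To make the outcome mindset concrete, here is a minimal Python sketch of one pass through such a loop. It is an illustration under stated assumptions, not a description of Lau's actual system, which the reporting does not detail. The `Ad` type, `worst_performers`, `run_cycle`, and the `propose_variant` and `measure` callbacks are all hypothetical names; in practice the callbacks would wrap a generation step and an ad platform's reporting, neither of which is modelled here.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Ad:
    # Hypothetical record; real ad platforms expose far richer data.
    ad_id: str
    headline: str
    impressions: int
    conversions: int

    @property
    def conversion_rate(self) -> float:
        # Ads with no impressions yet count as 0.0 rather than dividing by zero.
        return self.conversions / self.impressions if self.impressions else 0.0


def worst_performers(ads: list[Ad], n: int) -> list[Ad]:
    """Return the n ads with the lowest conversion rate."""
    return sorted(ads, key=lambda ad: ad.conversion_rate)[:n]


def run_cycle(
    ads: list[Ad],
    n: int,
    propose_variant: Callable[[Ad], Ad],
    measure: Callable[[Ad], Ad],
) -> list[Ad]:
    """One pass of the loop: replace a weak ad only if its variant measures better."""
    weakest_ids = {ad.ad_id for ad in worst_performers(ads, n)}
    next_round = []
    for ad in ads:
        if ad.ad_id not in weakest_ids:
            next_round.append(ad)  # leave the stronger ads alone
            continue
        candidate = measure(propose_variant(ad))  # generate, publish, measure
        improved = candidate.conversion_rate > ad.conversion_rate
        next_round.append(candidate if improved else ad)
    return next_round
```

Calling `run_cycle` on a schedule is what turns a one-off generation task into a loop. The comparison before replacement is the design choice that matters: a variant only displaces the original if it measures better, so the floor can rise but not fall.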

How Anthropic Pulled This Off: The Product Factor

It would be a mistake to read this story purely as a vindication of AI and ignore the other variable: Anthropic had an exceptionally strong product. Claude Code is a genuinely capable, accessible agentic tool. Claude itself had, and continues to have, strong word-of-mouth growth driven by real utility.

No amount of agentic marketing can sustainably grow a product that users do not love. The single-person growth team worked because the product was doing a significant amount of the heavy lifting through organic adoption, referrals, and media coverage. If you are considering thinning your team before you have a product or service that people genuinely want to tell others about, you are solving the wrong problem.

The Amazon Warning: When AI Becomes a Liability

Now for the cautionary half of this story. In December 2025, AWS services in China experienced a 13-hour outage. In March 2026, Amazon's main retail platform went down for six hours: checkout was broken, customer login was unavailable, and millions of dollars in transactions were lost. For context, a six-hour outage at a company of Amazon's scale represents losses that run into the tens of millions of dollars.

- March 2026: Amazon mandates Kiro. Amazon rolls out Kiro, its autonomous AI coding agent, and mandates 80% of engineers to use it as their primary development tool.

- December 2025: 13-hour AWS China outage. Internal reports leaked to the Financial Times suggest Kiro decided the most "efficient" resolution to a configuration issue was to delete and recreate the entire production environment.

- March 2026: 6-hour Amazon retail outage. Amazon's retail site goes down globally. Checkout fails. Account logins break. The outage costs millions in lost orders.

- 10 March 2026: Mandatory "Deep Dive" meeting. Amazon's top engineers convene and conclude that the recent pattern of high-severity incidents is linked to AI-generated code shipped without adequate human oversight.

- From now: Senior sign-off required. Amazon mandates that senior engineers must approve all AI-assisted code changes before deployment, specifically to prevent further "high blast radius" incidents.

Amazon's official response has been to attribute both incidents to "user errors", specifically misconfigured access controls that gave the AI tool permissions it should never have had. They are not entirely wrong. But that position somewhat misses the point.

"The AI did exactly what it was told, which is precisely the problem when 'what it was told' had not been properly reviewed."

(Paraphrased from Amazon's internal Deep Dive findings, March 2026.)

Whether you frame this as "the AI's fault" or "the engineer's fault" is a philosophical debate. The operational reality is that AI-generated code with a high blast radius was pushed to production without the oversight it required. The result was millions of dollars in losses and a company-wide policy reversal.

AI Leverage vs. Shortcuts: Comparing the Two Models

| Dimension | Anthropic (Austin Lau) | Amazon (Kiro) |
|---|---|---|
| Philosophy | AI as a force multiplier for a skilled, outcome-oriented operator | AI as a speed-up tool for junior code production |
| Mode of use | Agentic workflows designed around a specific outcome | Task-level code generation without systemic oversight |
| Oversight model | Single expert operator reviewing and directing the system | Code shipped to production with insufficient senior review |
| New policy response | Continued scaling | Mandatory senior sign-off on all AI code |
| Business outcome | $380B valuation. Lean, high-output growth team. | Multi-million dollar outages. Reputational damage. Policy reversal. |

What This Means for Your Organisation

The lesson from these two stories is not "AI is good" or "AI is dangerous." It is more precise than that: AI is a multiplier of intent and a magnifier of whatever oversight you do or do not have in place. It amplifies what you put in. If you put in clear outcomes, strong direction, and appropriate review, it multiplies your capability. If you put in speed-obsessed shortcuts and minimal oversight, it multiplies your exposure to catastrophic failure.

Here is the practical framework that separates these two outcomes:

- Before deploying AI on any task, define the outcome you need, not the task you want automated.

- Design agentic workflows that include a measurement and feedback loop, not just a generation step.

- Always establish the minimum permissions an AI tool needs for a given task. Do not grant broad access by default.

- Treat AI-generated code (and decisions) as a first draft. Review the logic, not just the syntax.

- Invest in your product or service quality before expecting a lean AI team to scale it. Leverage amplifies what exists; it cannot manufacture what is not there.

- Audit your AI-assisted workflows regularly for "blast radius": ask what the worst realistic failure looks like and whether your oversight model would catch it.

- Train your team to think in workflows and systems, not individual use cases. This is a higher-order skill that takes deliberate practice.
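The permission and blast-radius points in the list above can be expressed as a simple pre-deployment gate. The following Python sketch is illustrative only: `BlastRadius`, `ProposedChange`, `may_deploy`, the permission strings, and the `review_threshold` parameter are all hypothetical, and it does not represent Amazon's tooling or any real Kiro interface. It assumes someone has already classified each change's blast radius, which is itself a judgement call.

```python
from dataclasses import dataclass
from enum import Enum


class BlastRadius(Enum):
    LOW = 1     # e.g. a change confined to a sandbox
    MEDIUM = 2  # e.g. a change to one non-critical service
    HIGH = 3    # e.g. anything that can delete or recreate production resources


@dataclass(frozen=True)
class ProposedChange:
    description: str
    required_permissions: frozenset[str]
    blast_radius: BlastRadius
    ai_generated: bool
    senior_approved: bool = False


def may_deploy(
    change: ProposedChange,
    granted: frozenset[str],
    review_threshold: BlastRadius = BlastRadius.MEDIUM,
) -> tuple[bool, str]:
    """Return (allowed, reason) for a proposed change."""
    # Least privilege: refuse anything that needs more than was explicitly granted.
    missing = change.required_permissions - granted
    if missing:
        return False, f"needs permissions not granted: {sorted(missing)}"
    # Human in the loop: AI-generated changes at or above the threshold need sign-off.
    needs_review = (
        change.ai_generated
        and change.blast_radius.value >= review_threshold.value
    )
    if needs_review and not change.senior_approved:
        return False, "AI-generated change requires senior sign-off"
    return True, "ok"
```

Setting `review_threshold` to `BlastRadius.LOW` approximates a policy where every AI-assisted change needs approval; raising it trades review cost against exposure. The permission check runs first so that a change cannot reach the review queue while asking for access it was never meant to have.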

The Real Cost of Shortcuts

The tempting read on the Anthropic story is that AI means you can fire half your marketing team. That is the wrong lesson, and it is a costly one. The right lesson is that one skilled, outcome-oriented person with the right AI tools and a genuinely strong product to market can outperform a team ten times their size. The person is still essential. Their judgement, their clarity about outcomes, and their willingness to build systems rather than just generate outputs: that is the irreplaceable element.

The Amazon story is what happens when that human judgement is taken out of the equation and speed becomes the only priority. A 13-hour outage. A 6-hour retail blackout. And a mandatory policy forcing expensive senior engineers to babysit every line of code an AI agent produces. That is not a cost saving. That is a cost deferral with interest.

AI is not about doing more with less. It is about doing more with what you have, provided what you have includes skilled people, clear outcomes, and the discipline to review what the machine produces before it reaches production.

Let's Continue the Conversation

I write about AI, marketing strategy, and digital education for practitioners and students. If this post was useful, the best thing you can do is connect: I share analysis like this regularly on LinkedIn, and you can explore more on the site.

2 thoughts on “AI Leverage vs Shortcuts: Anthropic’s Growth Engine and Amazon’s Breaking Point”

Harish, you're hired!

That is the clearest illumination of how to use AI productively I’ve seen.

Please help me apply these principles to my business and to my work at the college!

Thank you so much, Layth! That genuinely means a lot coming from you. The principles are surprisingly simple once you strip away the hype: start with the outcome you want, build a workflow around it, and keep a human in the loop at every critical decision point. Looking forward to connecting with you.